Engineers rely on soil resistivity testing as one of the most critical steps in designing an effective cathodic protection system. This soil resistivity test method provides the data needed to evaluate soil conditions and design reliable corrosion protection systems.

Accurate soil resistivity data directly affects anode selection, system performance, and long-term corrosion control. Incomplete or inaccurate data leads to underperforming systems, premature failure, and costly redesigns.

It also serves as a foundational input to cathodic protection design, helping engineers develop systems based on accurate field data.

This article explains how engineers perform soil resistivity testing, when they need it, and how the data supports real-world cathodic protection design decisions.

What is Soil Resistivity Testing and Why It Matters for CP Design

One of the most important design parameters when considering the application of cathodic protection for buried structures is the resistivity of the soil. Engineers use soil resistivity testing to assess the corrosivity of the environment surrounding buried structures.

Soil conditions directly influence:

- System type

- Anode quantity

- System configuration

Without accurate soil resistivity data at both the structure and proposed anode locations, engineers risk designing ineffective cathodic protection systems that require costly remediation after commissioning.

How Soil Resistivity Determines Soil Corrosivity

Soil resistivity is the primary diagnostic factor for evaluating soil corrosivity. When engineers perform soil resistivity testing, they also consider factors such as:

- Soil composition

- Moisture content

- pH

- Chloride and sulfate concentrations

- Redox (oxidation-reduction) potential

While comprehensive soil analysis may be required for failure investigations, soil resistivity testing provides the most practical and widely used field measurement for cathodic protection design.

Soil Resistivity Ranges and Corrosivity Classification

Below is a typical chart correlating soil resistivity with soil corrosivity.

| Soil Resistivity (ohm-cm) | Corrosivity Rating |
| --- | --- |
| >20,000 | Essentially non-corrosive |
| 10,000 to 20,000 | Mildly corrosive |
| 5,000 to 10,000 | Moderately corrosive |
| 3,000 to 5,000 | Corrosive |
| 1,000 to 3,000 | Highly corrosive |
| <1,000 | Extremely corrosive |

SOURCE: Corrosion Basics: An Introduction, NACE Press Book, 2nd edition by Pierre Roberge
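
The chart above maps directly onto a small lookup. The following is a minimal sketch, not part of any standard: the function name `classify_corrosivity` is hypothetical, and the handling of readings that fall exactly on a band boundary (for example 10,000 ohm-cm) is an assumption, since the chart does not specify it.

```python
# Lower bounds in ohm-cm, taken from the chart above.
# Assumption of this sketch: a reading exactly on a boundary is assigned
# to the band that starts at that value (e.g. 10,000 -> "Mildly corrosive").
LOWER_BANDS = [
    (10_000, "Mildly corrosive"),
    (5_000, "Moderately corrosive"),
    (3_000, "Corrosive"),
    (1_000, "Highly corrosive"),
]


def classify_corrosivity(resistivity_ohm_cm: float) -> str:
    """Return the chart's corrosivity rating for a resistivity in ohm-cm."""
    if resistivity_ohm_cm <= 0:
        raise ValueError("resistivity must be positive")
    if resistivity_ohm_cm > 20_000:
        return "Essentially non-corrosive"
    for lower_bound, rating in LOWER_BANDS:
        if resistivity_ohm_cm >= lower_bound:
            return rating
    return "Extremely corrosive"


print(classify_corrosivity(4_000))  # falls in the 3,000 to 5,000 band
```

A rating from this lookup is only a first screen; the article's point that moisture, pH, and chemistry also matter still applies.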

How Soil Resistivity Testing Works

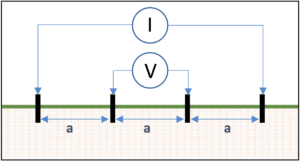

While there are several methods for measuring soil resistivity, the most common field testing method is the Wenner four-pin method (ASTM G57).

This method uses four metal probes driven into the ground at equal spacing. The outer pins are connected to a current source (I) and the inner pins to a voltmeter (V), as shown in Figure 1.

Soil Resistivity Formula and Calculation

Engineers convert measured resistance (R) into resistivity using a standard soil resistivity testing formula:

ρ = 2 × π × a × 30.48 cm/ft × R = 191.5 × a × R

Where:

- ρ = soil resistivity, Ω-cm

- a = probe spacing, ft.

- R = measured resistance, Ω
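
As a quick illustration of the formula, the sketch below converts one Wenner reading into resistivity. The function name `wenner_resistivity_ohm_cm` and the sample reading (10 ft spacing, 2.5 Ω) are hypothetical values chosen for the example, not field data.

```python
import math

CM_PER_FT = 30.48  # unit conversion used in the formula above


def wenner_resistivity_ohm_cm(spacing_ft: float, resistance_ohm: float) -> float:
    """Soil resistivity in ohm-cm from probe spacing (ft) and resistance (ohms)."""
    if spacing_ft <= 0:
        raise ValueError("probe spacing must be positive")
    if resistance_ohm < 0:
        raise ValueError("resistance cannot be negative")
    # rho = 2 * pi * a * 30.48 * R, i.e. roughly 191.5 * a * R
    return 2 * math.pi * spacing_ft * CM_PER_FT * resistance_ohm


# Hypothetical reading: 10 ft spacing, 2.5 ohms measured.
rho = wenner_resistivity_ohm_cm(10, 2.5)
print(f"{rho:.0f} ohm-cm")  # about 4,788 ohm-cm
```

The constant 191.5 in the article is simply 2 × π × 30.48 rounded to one decimal place.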

How Probe Spacing Relates to Testing Depth

Each probe spacing represents the average soil resistivity to a depth equivalent to that spacing. For example, a 5-foot spacing reflects the average resistivity to a depth of approximately 5 feet.

For cathodic protection system design, it is common to take multiple soil resistivity tests with various probe spacings.

For shallow anode placement, it is usually sufficient to take readings at spacings of 2.5 ft, 5 ft, 10 ft, 20 ft, and 25 ft. For deep anode applications, soil resistivity measurements may be recommended at much greater depths, corresponding to the anticipated depth of the deep anode system.

Understanding Soil Layer Effects in Resistivity Testing

It is important to note that the soil resistivity values from four-pin testing represent the average soil resistivity from the earth's surface down to the depth of the probe spacing, so each wider probe spacing also includes all of the shallower soil above it.

Using the Barnes Method to Interpret Layered Soil Resistivity

To isolate resistivity at specific depths, engineers apply analytical techniques such as the Barnes method. This method:

- Converts resistance to conductance

- Evaluates incremental changes between readings

- Calculates true layer resistivity

Engineers rely on this approach to identify low-resistivity zones that significantly impact cathodic protection performance.

Example of Layered Soil Analysis

For the Barnes analysis below, the data shows that a low-resistivity zone exists between 60 m and 100 m depth.

Test data (first two columns) and Barnes analysis (remaining four columns):

| Spacing a (m) | Resistance (ohms) | Conductance 1/R (siemens) | Change in Conductance (siemens) | Layer Resistance (ohms) | Layer Resistivity (ohm-m) |
| --- | --- | --- | --- | --- | --- |
| 20 | 1.21 | 0.83 | n/a | 1.21 | 152 |
| 40 | 0.90 | 1.11 | 0.28 | 3.57 | 441 |
| 60 | 0.63 | 1.59 | 0.48 | 2.08 | 264 |
| 80 | 0.11 | 9.09 | 7.5 | 0.13 | 17 |
| 100 | 0.065 | 15.38 | 6.29 | 0.16 | 20 |
| 120 | 0.058 | 17.24 | 1.86 | 0.54 | 68 |
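
The layer values in the table can be reproduced with a short script. This is a minimal sketch under stated assumptions, not a definitive implementation: spacings are taken to be in meters, each interval between successive spacings is treated as one layer, and layer resistivity is computed as 2π × layer thickness × layer resistance, which matches the tabulated results. The function name `barnes_layers` is hypothetical, and returning `None` when the conductance change is zero or negative is a choice made for this sketch, since no layer resistance can be derived in that case.

```python
import math


def barnes_layers(spacings_m, resistances_ohm):
    """Return (top_m, bottom_m, layer_resistance, layer_resistivity) per layer.

    Resistivity is in ohm-m when spacings are in meters.
    """
    layers = []
    prev_depth = 0.0
    prev_conductance = 0.0
    for depth, resistance in zip(spacings_m, resistances_ohm):
        conductance = 1.0 / resistance            # siemens
        delta = conductance - prev_conductance    # change in conductance
        thickness = depth - prev_depth            # layer thickness
        if delta > 0:
            layer_resistance = 1.0 / delta
            layer_resistivity = 2 * math.pi * thickness * layer_resistance
        else:
            # Sketch assumption: flag the layer instead of dividing by a
            # zero or negative conductance change.
            layer_resistance = None
            layer_resistivity = None
        layers.append((prev_depth, depth, layer_resistance, layer_resistivity))
        prev_depth, prev_conductance = depth, conductance
    return layers


# Test data from the table above.
spacings = [20, 40, 60, 80, 100, 120]
resistances = [1.21, 0.90, 0.63, 0.11, 0.065, 0.058]

for top, bottom, r_layer, rho_layer in barnes_layers(spacings, resistances):
    print(f"{top:>5.0f} to {bottom:<5.0f} m: {rho_layer:.0f} ohm-m")
```

Rounded to whole numbers, the layer resistivities come out as 152, 441, 264, 17, 20, and 68, matching the last column of the table. Layer resistances computed at full precision differ slightly from the tabulated ones (about 3.51 ohms rather than 3.57 for the 20 to 40 layer), which suggests the table derived that column from the rounded conductance changes.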

Choosing the Right Soil Resistivity Testing Equipment

Engineers must use the right soil resistivity testing equipment to collect accurate field data. Electrical noise from power lines, substations, railroad tracks, and many other sources can distort readings if not properly managed.

Modern soil resistivity meters include filtering capabilities to reduce interference and improve measurement accuracy.

High-Frequency Soil Resistivity Meters for Shallow Testing

High-frequency meters operate above 60 Hz and should be limited to collecting data to depths of about 100 feet.

This is because they lack sufficient voltage to handle long traverses, and they induce noise voltage in the potential leads that cannot be filtered out as the soil resistivity decreases and the probe spacing increases.

Engineers commonly use these meters for:

- Shallow testing

- Corrosion assessment

- Shallow anode design

They offer a cost-effective solution but have limitations in deeper applications.

Low-Frequency Soil Resistivity Meters for Deep Testing

Low-frequency meters operate between 0.5 and 2.0 Hz and support deeper soil resistivity testing.

These meters:

- Handle large probe spacing

- Provide superior noise filtering

- Deliver accurate readings at greater depths

Engineers typically prefer low-frequency meters for deep anode cathodic protection design.
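
The guidance in the two sections above reduces to a simple rule of thumb. The sketch below encodes it; the function name `recommend_meter` is hypothetical, and treating "about 100 feet" as a hard cutoff is a simplification made for illustration only.

```python
# "About 100 feet" from the text, treated here as a hard limit (assumption).
HIGH_FREQ_MAX_DEPTH_FT = 100.0


def recommend_meter(target_depth_ft: float) -> str:
    """Suggest a meter type for the deepest reading required, in feet."""
    if target_depth_ft <= 0:
        raise ValueError("target depth must be positive")
    if target_depth_ft <= HIGH_FREQ_MAX_DEPTH_FT:
        return "high-frequency"
    return "low-frequency"


print(recommend_meter(25))   # shallow anode survey
print(recommend_meter(300))  # deep anode survey
```

In practice, site noise and soil resistivity also influence the choice, so a real selection would weigh more than depth alone.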

Field Best Practices for Accurate Soil Resistivity Testing

Accurate soil resistivity testing depends on proper field procedures and site conditions.

- Select the Right Testing Location. The Wenner four-pin method requires enough open area to space the pins for the depths of interest. For deep anode cathodic protection systems, this means a clear distance of at least three times the anticipated anode system depth.

- Avoid Interference from Buried Piping and Other Metallic Objects. Buried metallic structures (piping, conduit, reinforced concrete structures, grounding systems, etc.) provide low-resistance current paths that can short-circuit the test current, distorting the resistance readings and yielding an erroneous soil resistivity value.

- Control Probe Depth for Accurate Readings. It is important that the probes are properly inserted into the earth. For shallow resistivity readings, probes that are driven too deep can impact the shallow readings. Ideally, the pins should be no deeper than 1/20th of the spacing between the pins and no more than 10 cm (4 inches) deep.

- Minimize Electrical Noise During Testing. Soil testing should not be performed directly under high voltage transmission systems or near other outside sources of current in the soil such as DC light rail systems.

- Record Soil Conditions and Environmental Factors. It is important that the location of the testing is accurately recorded along with the soil conditions and temperature at the time of testing. Testing should not be performed in frozen soil, or during periods of extreme drought or abnormally wet conditions.
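
Two of the rules above are simple arithmetic and can be checked before mobilizing to site. The following sketch is illustrative only; the function names are hypothetical, and it assumes spacings and depths are given in feet as elsewhere in this article.

```python
CM_PER_FT = 30.48


def min_open_length_ft(target_depth_ft: float) -> float:
    """Clear distance needed for a Wenner array: three times the target depth."""
    return 3.0 * target_depth_ft


def max_pin_depth_cm(spacing_ft: float) -> float:
    """Deepest a pin should be driven: 1/20 of the spacing, capped at 10 cm."""
    return min(spacing_ft * CM_PER_FT / 20.0, 10.0)


# Hypothetical deep anode survey to 300 ft needs 900 ft of open ground.
print(min_open_length_ft(300))

# At a 2.5 ft spacing the 1/20 rule governs; at 20 ft the 10 cm cap governs.
for spacing in (2.5, 20):
    print(f"{spacing} ft spacing: pins no deeper than {max_pin_depth_cm(spacing):.2f} cm")
```

The shallow spacings are the restrictive case: at 2.5 ft the pins should go in less than 4 cm, which is easy to exceed in soft ground.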

When to Use Professional Soil Resistivity Testing Services

Professional soil resistivity testing for cathodic protection becomes critical when projects require accurate field data to support system design, deep anode installations, or corrosion troubleshooting.

Engineers rely on experienced providers to perform soil resistivity tests, interpret layered soil conditions, and deliver reliable data for CP design.

Working with a qualified team helps:

- Reduce design risk

- Improve system performance

- Avoid costly rework

Key Takeaways: Soil Resistivity Testing for CP Design

Soil resistivity testing provides the most reliable indicator of soil corrosivity for buried structures and plays a critical role in cathodic protection system design.

Engineers most commonly use the Wenner four-pin method to perform soil resistivity tests. When the data is properly collected and interpreted, teams can design CP systems that perform reliably and efficiently.

Get Expert Soil Resistivity Testing Support

Learn about MATCOR soil resistivity testing services and cathodic protection design services.

Very good , clear and simple explanation

My husband would like to purchase a property where he can have a building built. I agree with you that the application of cathodic protection of buried structures must be used for the resistivity of the soil. Better yet, we’ll just hire a commercial erosion service so we’ll know if the soil is in good shape.

Rachel – Thank you for your comment. As a general rule, soil corrosivity is not a significant concern for most building structures. There are plenty of other soil concerns, including structural stability, environmental assessments, etc., that should be evaluated by companies that specialize in these assessments prior to commercial or residential property development. Best wishes! Ted Huck, Director of Manufacturing & QA, MATCOR, Inc.

Hi,

I am writing from Tekfen Construction. Now we are working one Saudi Aramco project. Based new systems that you proposed , we have some query. If you reply, we will appreciate.

1. If previous resistance is same with next one, how we calculate change in conductance ( looks 0)?

2. If previous conductance (1/R) is higher than next one, how we calculate change in conductance ?

3. What will be layer resistance for 2 questions?

Dear Osman:

Based on your question, it seems as though you are trying to calculate soil layer resistivity using the Barnes layer calculations. The Barnes layer method uses very broad assumptions:

1. The horizontal layers are homogeneous

2. The horizontal layers are uniform in thickness and level (parallel) with the surface of the earth

3. The resistance readings that you collect are a result of the test current going deep into the earth and traveling through all of the target layers

If the target soil layers are not homogeneous, are not level, or vary in thickness, then the readings will be affected.

Also, standard soil resistivity meters – which operate on low voltage and high frequency – are only reliable for electrode spacings up to 15 meters in most soils, and a maximum of 30 meters. Many of these meters do not have the sensitivity to measure low resistances accurately. I have found this to be a major source of error.

Hope this information helps.

Best regards,

Kevin Groll

Engineering Manager

MATCOR, Inc.

Dear Sir,

Thank you so much your explanation and review my knowledge .

Best wishes,

Taufik

do you have a method for non-uniform thickness layers…we ran “a” spacing of 1, 2, 5 and 10 ft (looking at really shallow variations)…

Dear John,

Thank you for your inquiry. The Barnes layer method is a theoretical construct based on a range of assumptions and is useful for extrapolating resistivities at depths where core sampling may not be practical. For a more detailed understanding of this methodology, we would refer you to https://onlinepubs.trb.org/Onlinepubs/hrbbulletin/65/65-002.pdf . If you are looking at shallow variations as your post suggests, it should be quite feasible to collect actual soil samples at each of those depths and use a soil box to measure in-situ resistivity data.

Hope this is helpful. Let us know if we can be of further assistance.

MATCOR, Inc.

The 68 ohm-m layer resistivity at 110m spacing is incorrect. All other values are correct. From my calculation, the correct value is 34 ohm-m and not 68 ohm-m as shown in the example. Kindly cross-check your result.

Thanks

Udeme,

We appreciate your input on our article regarding soil resistivities and Barnes Layer calculations. There were some errors in the results table for the sample calculations, and we have updated the table. Please continue to provide your input and comments as we strive to make the MATCOR website a great technical resource for our customers and for the industry in general.

Best regards,

MATCOR, Inc.

what is the proper or recommended soil resistivity for magazines?

Dear Elijah Nchidzi,

Thank you for your inquiry. Magnesium anodes are typically used in soil applications with resistivities ranging from 2000 ohm-cm to 7500 ohm-cm. Soil resistivities that are higher than 7500 ohm-cm often require a large quantity of anodes to reduce the overall anode-to-earth resistance so that sufficient current can be discharged to protect the intended structure. Conversely, soil resistivities below 2000 ohm-cm often result in high current discharge and shorter anode life. Of course, there are exceptions to this general guideline, and anytime we are considering galvanic anodes it is critical that a thorough design be performed, as there is no “volume” switch on a galvanic anode to increase (or decrease) the current output.

We hope this is helpful. Please let us know if you have further questions.

Best regards,

MATCOR, Inc.

If soil resistivity is found to be 66 ohms – cm how can I incorporate this result in designing of CP system based on HSCI Anodes for buried section of piles.

Dear Raza,

We thank you for the question you posted on our website. Soil resistivity value is used to determine the cathodic protection current requirement and ground bed resistance calculations for your ICCP system.

Best regards,

MATCOR, Inc.

Dear Raza,

While working on a grounding design I encountered a peculiar problem.

I know from Geotech data that the site I am working on has hard rock from 5 feet depth to 15 feet depth.

The resistivity of rock is supposed to be 10000 Ohm-m.

However with Barnes calculations for 0-5 feet layer the resistivity is 153 Ohm-m; 5 to 10 feet layer it is 194 Ohm-m and 5 to15 feet layer it is 59 Ohm- m.

Why Barnes layer resistivity is not comparable with actual rock resistivities of 10000 Ohm-m

Regards

rg

Dear Mr. AarGee,

Thank you for your inquiry. Without understanding the equipment and the methodology used to collect the data being input into the Barnes layer calculations, it is not possible for us to comment on the results. If the measurements that you are taking vary significantly from what you know to be true in the environment you are testing, it would appear that something was not performed correctly during the field testing. We would suggest that you review your processes and the equipment that you are using and retest the area in question.

Best regards,

MATCOR, Inc.

Dear,

Nice Explanation..

thank you,

regards,

kannan